Computer Systems Research

What are Computer Systems?

Computer systems are integrated collections of hardware and software that work together to perform computing tasks efficiently and reliably. They include physical devices such as processors, memory, and storage; operating systems that manage resources; programming tools that enable software development; and networks that connect computers.

Computer systems power everything from personal devices to large-scale data centers, making modern technology faster, more secure, and more energy-efficient.

Areas of Focus

Our computer scientists lead innovative research projects to:

- Optimize program analysis and compiler technologies to improve software efficiency and reliability

- Design parallel, distributed, and mobile computing systems that enable multiple processors and devices to work together effectively

- Develop cluster-based server technologies for scalable, high-performance computing solutions

- Build low-power hardware and software for more energy-efficient computing

- Explore processor and memory architectures to boost computing speed and reliability

- Investigate concurrency and synchronization techniques to improve system stability during multitasking

- Create advanced programming environments and design new programming languages for better software development

- Enhance operating systems for efficient hardware resource management

- Advance networking and storage systems to support fast, secure communication and data handling

- Strengthen cybersecurity to protect systems and data from threats

Powering Practical Solutions

Computer Systems Research at Rochester

At the University of Rochester, computer systems research combines practical engineering solutions with strong theoretical foundations. Researchers collaborate closely across departments and with industry partners, addressing real-world challenges in cloud computing, big data, cybersecurity, and high-performance computing within a supportive academic environment.

Computer Systems Researchers

Meet the faculty at the forefront of computer systems research.

Criswell, John

Associate Professor of Computer Science

- Office Location: 3405 Wegmans Hall

- Telephone: (585) 275-1118

- Web Address: Website

Interests: Computer Security; Automatic compiler transformations; Secure Virtual Architecture

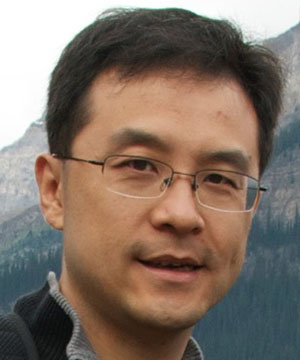

Ding, Chen

Professor of Computer Science

Chair, Department of Computer Science

- Office Location: 3407 Wegmans Hall

- Telephone: (585) 275-1373

- Web Address: Website

Interests: Locality theory and optimization; Compilers and run-time systems to improve locality and parallelism; Memory management; Parallel programming; High-performance computing

Dwarkadas, Sandhya

Visiting Research Professor of Computer Science

- Web Address: Website

Interests: Parallel and distributed computing; Computer architecture and networks; Interaction and interface between the compiler, runtime system, and underlying architecture; Software distributed shared memory; Integrated compiler and runtime support for parallelism; Simulation methodology; Uniprocessor and multiprocessor architectures; Parallel applications development; Performance evaluation

Guo, Yanan

Assistant Professor of Computer Science

- Office Location: 3403 Wegmans Hall

- Web Address: Website

Interests: Microarchitectural side channels; Software security; Machine learning security; GPU systems and architectures; Memory systems

Heinzelman, Wendi

Professor of Electrical and Computer Engineering

Professor of Computer Science

John and Barbara Bruning Dean, Hajim School of Engineering and Applied Sciences

- Office Location: 309 Lattimore Hall

- Telephone: (585) 273-3958

- Web Address: Website

Interests: Wireless sensor networks; Mobile ad hoc networks; Multimedia communication; Heterogeneous networking; Cloud computing

Huang, Michael

Professor of Electrical and Computer Engineering

Professor of Computer Science

- Office Location: 414 Computer Studies Building

- Telephone: (585) 275-2111

- Web Address: Website

Interests: High-performance and energy-efficient computer microarchitecture; non-von Neumann computing; Ising machines

Nargesian, Fatemeh

James P. Wilmot Distinguished Assistant Professor in the Department of Computer Science

Assistant Professor of Computer Science

- Office Location: 3015 Wegmans Hall

- Web Address: Website

Interests: Data management; Data science; Data discovery and integration

Pai, Sreepathi

Associate Professor of Computer Science

- Office Location: 3409 Wegmans Hall

- Telephone: (585) 276-2391

- Web Address: Website

Interests: Compilers; Heterogeneous Architectures; GPU algorithms; Performance Modeling

Scott, Michael L.

Arthur Gould Yates Professor of Engineering

Professor of Computer Science

Former Chair, Department of Computer Science

- Office Location: 3401 Wegmans Hall

- Web Address: Website

Interests: Systems software for parallel and distributed computing; Programming languages; Operating systems; Synchronization; Transactional memory; Persistent memory

Zhu, Yuhao

Associate Professor of Computer Science

- Office Location: 3501 Wegmans Hall

- Email: yzhu@rochester.edu

- Web Address: Website

Interests: Computer Systems and Architecture; Computer Imaging and Graphics; Augmented/Virtual Reality; Human (Visual) Perception and Cognition; Computational Art, Art History, and Aesthetics